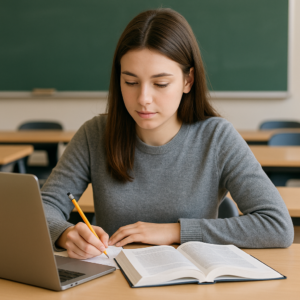

Hire Someone to Do My Statistics Assignment – 100% Accurate Solutions

Having trouble with your statistics assignment? You’re not the only one, and you don’t need to struggle through it alone. Whether it’s regressions, t-tests, probabilities, or something more complex like panel data or ANOVA, I can help you get it done right and with accuracy.

Professional STATA Consultants

Expert guidance for accurate statistical results and error-free solutions.

Comprehensive STATA Services

All STATA tasks covered, from data cleaning to advanced modeling.

Reliable STATA Support

Timely, trusted help for homework, assignments, and research.

Advanced Data Analytics in STATA

High-level forecasting, regression, panel data, and biostatistics.

A lot of students come to me when they’re confused, out of time, or just can’t wrap their heads around the formulas. That’s when it helps to have someone experienced who can solve it step by step and send back clean answers. Not just correct, but understandable too. I do each question based on what your prof wants: no copied stuff, no generic answers. I use STATA, SPSS, R, Excel, or even solve by hand if that’s what fits. And I always try to explain things so you can still learn from it. Got a short deadline? No problem. Your data and work stay private. So yeah, if you’re asking, “Can someone do my stats assignment?” then yes, I can. And I’ll do it right and on time.

Expert Statisticians Deliver Step-by-Step Answers

When you’re stuck with stats homework and don’t know where to start, getting help from someone who knows what they’re doing makes a huge difference. My team of statisticians and I don’t just give you the final answer; we break down every single step so you actually understand what’s going on. It doesn’t matter if it’s a regression, ANOVA, logistic model, t-test, or something else: we pick the right method, use the proper formula, and explain why we’re doing each part. If you need software like R, SPSS, Python, STATA, or Excel, we include screenshots or code and write notes to make it all make sense. Each solution is organized the same way: first the question, then the steps, then the explanation. That way, even if you missed the class, you can still follow what’s happening. Students tell us the steps helped them learn better. And yeah, that’s the point: not just submitting, but actually getting it. So if you’re stressed or stuck, let someone who knows how to solve it guide you through. We got you.

Guaranteed Accuracy With Proper Formulas & Interpretation

Accuracy in stats is not just something nice to have; it’s the main thing. If you’re using the wrong formula, even by a little, the result will be off and might make no sense. That’s why I always do my best to get the math right and also explain what it means in plain language. Sometimes people just press buttons in SPSS or R and get output without even knowing which test they ran. That’s risky. You have to know whether it’s a one-tailed or two-tailed test, or whether you should use a non-parametric test instead. These are small things, but they matter. Also, I don’t just say ‘p < 0.05’ and move on. I tell you what that p-value means for your question. Does it support your hypothesis or not? How much can you trust the result? That’s the kind of clarity I try to give. So yeah, if you want things done well and explained without overcomplicating, I got you.
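To show why the one-tailed versus two-tailed choice matters, here is a minimal sketch using only Python’s standard library. It assumes a z statistic under a standard normal null; the value 1.8 and the function name `z_test_p_values` are made up for illustration.

```python
from statistics import NormalDist


def z_test_p_values(z):
    """Return (upper-tail p, two-tailed p) for a z statistic under N(0, 1)."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    upper_tail = 1 - std_normal.cdf(z)
    two_tailed = 2 * (1 - std_normal.cdf(abs(z)))
    return upper_tail, two_tailed


# Hypothetical test statistic, not taken from any real dataset.
one_sided, two_sided = z_test_p_values(1.8)
print(f"one-tailed p = {one_sided:.4f}, two-tailed p = {two_sided:.4f}")
```

With this made-up statistic the one-tailed p-value falls below 0.05 while the two-tailed one does not, so the same data can look "significant" or not depending on a choice that should be made before running the test.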

Get Help With Assignments, Projects, Labs & Case Studies

A lot of students come to me when things are messy or time is almost up. I help with all the steps: data cleaning, picking the right method, doing the analysis, and making sure the answers look good and make sense. Even if you already started and got stuck in the middle, I can jump in and sort it out. And I try my best to match your university’s format or style. I don’t just give answers. I explain them too, so you actually learn from the work you submit. So yeah, if your project or lab or anything else is giving you a headache, send it over. I’ll help you figure it all out and finish on time.

Top-Rated Statistics Homework Help With Original Work (No Plagiarism)

When you’re dealing with stats assignments, it’s not just about getting the answers right; it’s also about making sure the work is original. Copy-paste stuff can really mess up your grade and reputation. That’s why I offer top-rated statistics help with 100% original work, no plagiarism ever. Whether you’re doing regression, probability questions, t-tests, or anything in R, SPSS, STATA, or Excel, I build every solution from scratch. No templates, no repeat answers from some old file. I read the instructions, work the data, and explain it step by step. A lot of students got burned before by fake tutors who send copied answers. I don’t do that. I make sure your homework is clean, unique, and Turnitin won’t flag it. If citations are needed, I add those too. So if you want to stop worrying about plagiarism and just get the stats done right, message me. I’ll help you out with work that’s honest and done properly. Because your grade matters, and so does trust.

Authentic Work Checked With AI & Plagiarism Tools

When you’re doing assignments or reports for uni, it’s not just about finishing the work; it has to be original. That’s why I always deliver authentic content and check it with AI tools and plagiarism scanners before giving it to you. No reused answers, no copying from random sites. Whether it’s a stats paper, coding report, or data writeup, it’s created based on what you need. I run checks through Turnitin-style tools and AI detection platforms to make sure nothing gets flagged. If you want, I also send a report with the results so you know it’s clean before you submit. Code, text, graphs: all verified. I don’t take shortcuts. So yeah, if you want help that’s safe to submit and actually original, you’re in the right spot. No copy-paste, no stress, just proper work.

Zero Copying, Unique Analysis and Custom Solutions

If you’re tired of copy-paste work and the same boring answers, then you’re in the right place. I focus on zero copying, real analysis, and custom solutions made only for your project. No templates, no reused files, and no internet shortcuts. Every assignment I do is written fresh, from code to graphs to explanations. Whether it’s regression, a case study, or research data, I make sure it matches your topic exactly. Say you’ve got a unique dataset. I don’t just plug it into some old model. I build the solution around your variables, with proper logic and interpretation that makes sense. And I don’t use random examples just to fill space. Everything is checked for originality too. Turnitin-safe, AI detection-safe, and professor-approved. So you don’t have to worry about getting flagged. So yeah, if your assignment needs something that’s actually made for you, not copied from somewhere else, let’s talk. You’ll get something different, something that works, and something that shows real effort.

Mistake-Free Assignments With Accurate Referencing

Tired of losing marks because of small mistakes or wrong citations? I offer mistake-free assignments that also include correct referencing in whatever format your university asks for. From stats reports to research papers or case analysis, I check everything for errors. Grammar, formatting, calculations, charts: all double-checked before I send it. So you don’t end up fixing stuff at the last minute. I also make sure your referencing is done right. APA, Harvard, MLA, Chicago: whatever style your instructor needs, I follow it. In-text citations and reference lists are included, not left out like some people do. Many times students do all the work right but still lose points for missing references or bad layout. I don’t let that happen. The work I give is neat, correct, and easy to submit. So yeah, if you want your assignment to be not just correct but also fully cited and clean, let me know. You’ll get something that looks good and scores better.

Custom Statistics Reports With Graphs, Interpretation & Data Cleaning

Need a stats report that actually looks good and makes sense? I do custom statistics reports with graphs, interpretation, and full data cleaning, just how your professor or team wants it. Whether it’s SPSS, Excel, R, or Python, I take messy data and turn it into something solid. I’ll handle the missing values, fix weird outliers, and clean up the stuff most people ignore. Because if your data’s messy, your results are gonna be wrong too. You’ll get neat visuals like bar graphs, boxplots, scatter diagrams, or whatever suits the data. But I don’t stop there. I also explain what each thing means in plain words, so your reader actually understands what’s going on. I’ve done these reports for both uni students and business teams, so I know what kind of format works. Whether it’s APA style or just a clear layout, I got it. So yeah, if your stats file still looks like a mess, let me fix it. You’ll get something clean, clear, and marks-worthy.

Clean & Organized Dataset With Proper Labeling

If your dataset is messy and not labeled properly, your analysis is probably gonna be wrong too. That’s why I offer cleaned and organized data with the right labeling so it’s ready to use. I work with Excel, SPSS, CSVs, Python files, and more. I remove missing values, fix formatting issues, clean duplicates, and rename the variables so they actually make sense. I don’t just clean the data, I also make it look proper. Labeling is more important than people think. If your column is named something like ‘Var1’ or ‘Untitled’, that’s no help to anybody. I make sure all column names and entries are neat and match what your analysis needs. This kind of work saves you tons of time later, and your teacher or boss will understand it better too. Whether it’s for an assignment, a thesis, or just a group project, clean and well-labeled data makes everything easier. So yeah, if your file is looking like a mess, send it to me. I’ll sort it out and give it back clean and ready.

Visual Charts Using Excel, R, SPSS, Python & Tableau

Making good charts ain’t just about adding colors or shapes; it’s about making your data look smart and easy to understand. I make all kinds of visual charts using tools like Excel, R, SPSS, Python, and Tableau. Whether you’re working on a class project, a report, or a presentation, I got you. I can do bar, line, pie, scatter, heatmaps, and more. In Excel, I use pivot tables and filters. With R and Python, I use ggplot and pandas to make visuals that look professional. If you’re using SPSS, I generate the output and help label the graph so it doesn’t confuse your teacher. Tableau dashboards? Yep, done that too, simple or interactive. I don’t just dump the chart and leave. I also tell you what it means, so you don’t have to guess when writing about it. So if your data just looks like a boring sheet full of numbers, let me help you turn it into visuals that people actually get. Fast, clean, and not a headache to understand.

Full Explanation Written in Academic Language

When you’re doing a stats project or research report, just running the test isn’t enough. You also have to explain what the results mean in a proper academic tone. That’s where I help. I write full explanations in clean academic language that sounds professional and matches university style. No basic phrases like “it goes up” or “this is good”. Instead, I write things like “The coefficient shows a significant relationship” or “The findings support the alternative hypothesis at the 5% level”. I also link the results to your objective and write it in a way that fits business, health, engineering, or whatever subject you’re studying. APA, MLA, or Harvard: I format it how your professor wants. If your data looks right but the writeup doesn’t make sense, I’ll fix it. Every explanation is clear, logic-based, and fits the research question. So yeah, let me help make your writing match the quality of your analysis. Clear, smart, and totally academic.

About Stata Homework

Your Academic Partner in STATA Analysis

StataHomework is a specialized academic support service dedicated to helping students and researchers perform accurate statistical analysis using STATA.

Our experts provide complete assistance with do-file coding, data cleaning, visualization, model estimation, and output interpretation for coursework, dissertations, and research projects.

From regression and panel data models to time-series forecasting, logistic analysis, and survey statistics, we ensure every solution is technically correct, clearly explained, and formatted according to academic requirements.

Our mission is to simplify complex STATA work and deliver results that are accurate, interpretable, and ready for submission.

Pay an Expert to Take My Stats Assignment or Online Exam

Let’s be real: not everyone has the time (or the nerves) to handle a tough stats exam or those never-ending assignments. You might be working, dealing with life, or just don’t wanna stress over formulas, chi-square, z-tests and so on. So if you’re thinking, “Can I pay an expert to take my stats assignment or online exam?”, you’re not the only one.

I’ve worked with students who needed help with tight timed exams, take-home tests, or crazy data assignments. I don’t just guess – I know what I’m doing. I cover all major topics: regression, ANOVA, t-tests, you name it. Even software like STATA, SPSS, R, Excel – I’ve got it all under control. You get reliable answers, on-time delivery and full privacy. Plus, if anything’s off or unclear, I’ll fix it. Mistakes can happen but I always set it right, quick. So yeah, if stats is messing with your GPA or your sleep, just reach out. You focus on your life, I’ll take care of the numbers.

Secure & Confidential Login Support for Exams

Worried about logging into your exam portal without messing anything up? I offer secure and private help for students who have online tests, quizzes or LMS exams to deal with. The goal is to help you get through it smooth and stress-free. I’ve worked with platforms like Canvas, Moodle, Blackboard and even some custom university portals. I know how to handle login steps, stuck pages, time countdowns and other tech issues. You can choose to get live help or let me guide you remotely, whatever feels better. Your details are safe with me. I don’t save any login info or share anything. I work clean and quick so you can focus on your answers. A lot of students get confused or even miss exams just because of login issues or sudden errors. Don’t let that happen. If you need support to enter your test safely and keep things smooth, I got you. No stress, no panic, just real support when it counts.

Qualified Professionals Do Tests, Quizzes & Full Courses

Need help passing your online class, doing quizzes or finishing up tests? I work with a team of qualified people who can handle your academic work properly. Whether it’s a stats quiz, econ course or those weekly math assignments, we help you get it done with less stress. We use platforms like Moodle, Canvas, Pearson, Cengage and others. The person doing your test actually knows the subject, so it’s not just guesswork or copy-paste. That means you get better grades and fewer mistakes overall. Many students get busy with work or just find the class too hard, and that’s okay. We take care of it from login to submission. Discussions, tests, midterms, projects: all covered. It’s all private. No info shared. No accounts misused. So if you’re wondering, “Can someone do my course for me?”, then yep, we can. And we do it well, on time, and without drama.

Fast Delivery for Timed Assignments & Assessments

If you got a timed assignment or exam that needs to be done fast, I can help. You don’t got all day to figure stuff out when there’s a clock running. I work fast, and I make sure things get done before it’s too late. Whether it’s a multiple choice quiz, a short test, or just some quick calculation, I try my best to do it fast and not mess it up. I’ve helped many students who were in full panic because they only had like 30 minutes left. Some systems even kick you out if you don’t answer in time, and that’s really stressful. That’s why I stick with you the whole time if needed, or just do it quick and send the answers back. I don’t waste time on long steps. I go right to the point, solve it, and move to the next. You get clean answers, fast.

Live Support for Statistics Coursework, Projects & Case Studies

Stuck in the middle of a stats project and need help right now? I provide live support for all your statistics work: coursework, projects, or even big case studies that just aren’t making sense. Instead of waiting for someone to reply on forums or email, you can just message me and I’ll jump on live chat or a call. I walk you through the steps, show you what to do, and explain the results in simple terms. You’re not just getting answers, you’re learning too. Whether it’s Excel, R, SPSS, STATA, or even Python, I can help with whatever tool your assignment needs. Need help cleaning data? Or stuck making a graph? Or don’t know what to write in the conclusion? I got you. This kind of live help is great for urgent submissions, group work, or when your supervisor asks questions you don’t get. So if your deadline’s coming fast and nothing is ready, ping me.

Nowadays, using the right method for data analysis isn’t just important, it’s crucial. I use industry-accepted approaches that work in real research, business reports, and college assignments too.

I’ve worked on many projects where methods like regression, hypothesis testing, PCA, or ANOVA made the whole difference. Sometimes students or professionals use the wrong model and the whole result is thrown off. That’s why I carefully pick the method based on what your data is saying, not just what sounds fancy.

Tools like Excel, R, Python, SPSS, and STATA are what I normally use. I make sure results are clean, organized, and come with proper output and explanation. It doesn’t matter if it’s for marketing, economics, business, or the health field: the analysis will fit the context.

And if you’re not sure what to apply? No worries, that’s where I step in. You’ll get an analysis that’s not only correct but explained in simple, human terms, so it makes sense in the real world too.

Working with data from business, marketing, finance, or economics can be hard, especially when it’s messy or you don’t know which model to use. That’s where I come in: I help simplify your files and get the results out clean.

For business, I work on things like operations, KPIs, and dashboards. I clean the data, add graphs, and explain the trends. If you’ve got a case study or report, I’ll format it nicely too.

In finance, I help with forecasting, returns, portfolio stats, and risk measures. Excel, Python, R, even SPSS: no problem. I make sure your numbers are correct and easy to follow.

Marketing usually has survey data or social media stats. I help make sense of that using charts, segmentation, or customer insights. For economics, I do regression models and supply-demand trends, and help explain the theory side too.

So yeah, if your file looks messy or you’re not sure what analysis to run, let me handle it. I’ll give you clean output and clear notes that make your work look sharp.

Need a report that actually matches what you need, not just a boring template? I make custom reports for both academic and corporate use, depending on what you’re working on.

For students, I write reports for thesis, coursework, group projects or even journals. I add graphs, analysis, APA or MLA format, and everything your uni expects. Every section is done based on your topic and what your teacher is asking.

For companies, I make reports that focus on performance, sales, KPIs or customer survey results. I use Excel, Tableau, Python, etc. to turn raw data into something that’s easy to show in meetings or send to clients.

The visuals (charts, tables, graphs) are all done neatly and labeled. And if you want Word, Excel, or PowerPoint format, that’s fine too.

So yeah, if your report needs to be clean and useful, I’ll help you build it. Academic or business, no fluff: just a report that actually does the job right.

Get Help With College Stats, Business Analytics, Econometrics & More

Having trouble with college stats or business analytics? You’re not alone. These topics can be really confusing, especially when you’ve got other classes and deadlines too. That’s why I offer help with not just stats, but also econometrics, data analysis, business analytics, and more. From basic charts to complex regression models, I’ve helped many students figure out things they thought were impossible. If you’re stuck in Excel, R, STATA, or even Python scripts, don’t worry, I’ll sort it out. Even SPSS and time series stuff. Econometrics projects with confusing panel data? I handle those too. So if your stats class feels like too much or the project deadline’s coming fast, just reach out. I’ll help you get it done properly and on time. No more stressing, just good clean work done by someone who’s done it a lot before.

Coursework Help for Business, Engineering & Health Sciences

I provide expert academic support that fits your subject and helps you meet deadlines without panic. For Business, I assist with things like finance, statistics, case studies, and analytics assignments. Whether you need Excel models or a write-up that makes sense, I can do it. If you’re into Engineering, I help with MATLAB work, design reports, simulation outputs, data analysis, and even those confusing graphs. I’ve helped students in mechanical, electrical, and industrial streams. Health Science students can get help with biostats, clinical research papers, SPSS results, and more. I explain results so they’re easy to understand, not full of confusing terms. You’ll get clean, properly formatted work that’s made to match your assignment. Plagiarism-free, referenced (if needed), and on time. So yeah, if your coursework is stressing you out, or you just don’t know how to begin, send it over. I’ll help you finish it, and do it right.

Assistance With Market, Survey & Financial Data

Handling market data, survey results, or financial numbers ain’t always easy, especially when you don’t know how to clean it, model it, or explain the results. That’s where I help. I offer stats help for market research, survey data, and financial datasets so you can stop guessing and get proper answers. Whether it’s a uni project, a business file, or a report for your job, I’ll handle the full thing: clean the data, run the analysis, make graphs, and explain the findings. Tools I use include SPSS, R, Excel, Python, and STATA. You just send me the file, I do the rest. With survey data, I help with coding, fixing missing values, finding trends, and making visuals. For market data, I do segmentation, correlation, and forecasts. And if it’s financial data, I can work on time series, returns, risk, and other finance models. The report you get will be clean, simple to follow, and ready to submit. So yeah, if your data’s stressing you out or you’ve got no idea where to begin, let me handle it. You’ll get results that actually make sense.

Research Paper Support With Proper Modeling & Interpretation

Doing a research paper with statistics and don’t know if you’re using the right model? You don’t need to take chances. I provide support for research papers that includes modeling, data analysis, and explanation of what the results actually mean. Whether you’ve got a regression, ANOVA, t-test, or panel data setup, I can help you pick the right method and do it correctly. I use STATA, R, SPSS, Excel, and Python depending on what your uni or supervisor asks for. Lots of students get stuck not on the analysis part but on the part where you have to explain what the numbers mean. That’s where I come in. I break it down in simple words, linked to your objective or hypothesis. Also, I can do formatting in APA or other styles if you need it for journals. So yeah, if your paper has statistics and you feel lost with the models, don’t leave it to luck. Reach out and I’ll help you from the stats part to the final interpretation, clear and done right.

SERVICES

Our Exclusive Services We Offer For You

Data Cleaning Management

We deliver clean, accurate, and reliable data for smarter business decisions.

Panel Data Fixed Effects RE DID Models

Expert estimation and interpretation of Fixed Effects, Random Effects, and DID models for accurate panel-based causal insights.

Regression Analysis OLS Logistic Models

Get expert help in Regression Analysis using OLS and Logistic Models for accurate, reliable, and decision-driven results.

Descriptive Statistics EDA

Get expert Descriptive Statistics & EDA services that transform raw data into crystal-clear insights for smarter decisions.

Do File Coding Automation

Streamline your workflow with professional Do-File coding and automation services for fast, error-free data analysis.

Graphs Data Visualization

Unlock powerful insights with premium graphs and data visualization services that turn complex numbers into compelling stories.

Hypothesis Testing Diagnostics

Get reliable Hypothesis Testing & Diagnostics services that deliver accurate, evidence-based conclusions for smarter decision-making.

Statistics Assignment Help

Score top grades with expert Statistics Assignment Help that delivers accurate solutions, clear explanations, and timely results.

Survey Data Biostatistics Epidemiology Survival Models

Access premium Survey Data, Biostatistics, Epidemiology & Survival Models services for precise analysis, reliable outcomes, and research-ready results.

Thesis Research Output Interpretation Reporting

Get professional Thesis & Research support with expert output interpretation and clear reporting for publication-ready results.

Time Series Analysis ARIMA Unit Root

Unlock powerful forecasting with expert Time Series, ARIMA & Unit Root services designed for precision, reliability, and breakthrough insights.

Get Statistics Homework Help in R, SPSS, STATA, MATLAB & Python

Stuck with your stats homework? Don’t worry, you’re definitely not the only one. I give real expert-level stats homework help using R, SPSS, STATA, MATLAB, and Python, shaped according to your assignment, dataset, and deadline. Be it regression in STATA, ANOVA in SPSS, or Python coding, I got your back.

Many students try YouTube videos, Reddit, or classmates but still end up lost. That’s where I come in. I don’t just hand over answers, I explain the logic too (if you want, that is). You get clean scripts, correct outputs, and explained results. Need graphs or APA-styled tables? Yep, those too. I’ve worked with students from big-name universities, and I know how to hit the target. So, if your deadline’s coming fast and nothing makes sense, hit me up. Let me turn that messy stats work into a submission-ready file. Fast. Clear. Done. Stats doesn’t have to be scary. Let’s fix it together.

Code, Outputs, Graphs & Full Data Analysis

Want everything done in your stats project? I provide code, outputs, graphs, and full data analysis in one go. You don’t need to worry about jumping between tools or guessing how to explain the output. I use Python, R, SPSS, STATA, Excel, and other tools depending on what you need. Code is written clean and made to match your assignment or topic. I do the cleaning, the testing, and everything else that needs to be done to make it complete. Graphs are not just pasted in; they actually show what matters. You’ll get things like scatter plots, line charts, histograms, and other visuals that help explain the numbers. And yeah, I also give interpretation. I don’t just run the model and leave you hanging. I explain the results in simple words so you can write your report without panic. So if your deadline’s close and your data is just sitting there, send it over. I’ll do the full thing from scratch: no recycled stuff, just clean stats that make sense and actually work.

Clean Datasets With Explanation of Each Step

If your dataset is messy, your analysis won’t be correct. That’s just the truth. Missing values, wrong formats, weird outliers: these things can mess up the whole result. I offer data cleaning that not only fixes these problems, but also explains what I did at every step. I work with Excel, SPSS, R, Python, and STATA. Whatever file you’ve got, I’ll open it, scan it, and clean it properly. I remove duplicates, fix missing values, correct data types, and do whatever else is needed to make it work. But more than that, I don’t just give you the clean file. I also tell you what I did and why. If I deleted rows or changed formats, you’ll know why it was important. This is very useful if you’re doing a research paper or project where the teacher asks why certain things were removed or replaced. So yeah, don’t just guess when it comes to cleaning data. Let me help make it ready for real analysis: clean, clear, and with full explanation.
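The idea of cleaning data while recording what was done at each step can be sketched roughly like this, using only Python’s standard library. The field names, the sample rows, and the function `clean_rows` are all hypothetical, and dropping rows with missing or unparseable values is just one possible policy (imputing or flagging them are alternatives).

```python
def clean_rows(rows, numeric_field):
    """Drop duplicates and bad values, returning (cleaned_rows, log_of_steps)."""
    log = []        # human-readable record of every row that was removed
    seen = set()    # keys of rows already kept, used to detect exact duplicates
    cleaned = []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key in seen:
            log.append(f"dropped exact duplicate: {row}")
            continue
        seen.add(key)
        raw = (row.get(numeric_field) or "").strip()
        if raw == "":
            log.append(f"dropped row with missing {numeric_field}: {row}")
            continue
        try:
            value = float(raw)  # correct the data type: text -> number
        except ValueError:
            log.append(f"dropped row with non-numeric {numeric_field}={raw!r}")
            continue
        cleaned.append({**row, numeric_field: value})
    return cleaned, log


# Made-up example rows, as they might come out of a CSV reader.
raw_rows = [
    {"id": "1", "score": "72.5"},
    {"id": "1", "score": "72.5"},  # exact duplicate
    {"id": "2", "score": ""},      # missing value
    {"id": "3", "score": "n/a"},   # wrong format
    {"id": "4", "score": " 88 "},  # stray whitespace
]
cleaned, log = clean_rows(raw_rows, "score")
for entry in log:
    print(entry)
```

The log is the part that answers a teacher’s "why was this removed?" question: every dropped row has a stated reason next to it.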

Support for Logistic, OLS, Time Series & Machine Learning Models

Whether you’re working on logistic regression, OLS, time series, or even a machine learning project, I can help you sort it out quickly and correctly. I don’t just throw numbers at you; I explain the models, the steps, and why we’re using them. For logistic regression, I explain odds ratios and how to read coefficients. For OLS, I check assumptions, show how to build the model, and explain what R-squared is telling you. I also help pick the right variables so your model doesn’t overfit. Time series models like ARIMA or SARIMA can be confusing. I help with making the data stationary, choosing lags, and building forecast charts that make sense. And if you’re doing ML models like decision trees or random forests, I can help train, test, and explain accuracy. I use STATA, R, or Python, whatever your course or research needs. And I try to write comments in the code so you can follow it later. So yeah, if you’re stuck on your model or just want someone to help you understand it better, I’m here to make it easier.
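Two of the ideas above are easy to illustrate with a small standard-library sketch: turning a logistic regression coefficient into an odds ratio, and first-differencing a series as a common first step toward stationarity. The coefficient, standard error, and series are invented for illustration, and the 1.96 multiplier assumes an approximate 95% normal-based interval.

```python
from math import exp


def odds_ratio(coef, std_err, z_crit=1.96):
    """Convert a logit coefficient to an odds ratio with an approximate CI."""
    estimate = exp(coef)
    interval = (exp(coef - z_crit * std_err), exp(coef + z_crit * std_err))
    return estimate, interval


def first_difference(series):
    """Return the change between consecutive observations."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


# Hypothetical logit output: coefficient 0.693 with standard error 0.20.
or_estimate, or_interval = odds_ratio(0.693, 0.20)
print(f"odds ratio = {or_estimate:.2f}, 95% CI = "
      f"({or_interval[0]:.2f}, {or_interval[1]:.2f})")

# Hypothetical series with a steady upward trend.
trend = [10, 12, 14, 16, 18]
print(first_difference(trend))  # the differenced series no longer trends upward
```

An odds ratio near 2 reads as "the odds roughly double for a one-unit increase in the predictor", which is usually easier to write up than the raw coefficient. Differencing alone does not prove stationarity; a unit root test would normally follow.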

Best Experts for Probability, Regression, Hypothesis Testing & Data Analysis

If you’re stuck on probability, confused by regression outputs, or lost in hypothesis testing, you’re definitely not alone. These topics can get really tricky, and most students struggle to get them right without some proper help. That’s where working with experienced experts actually makes a big difference. I’ve supported tons of students and even working professionals on tasks involving everything from coin toss questions to big regression models and p-values that make no sense. I don’t just solve it, I explain it too, so you understand what’s happening. Using SPSS, STATA, R, Excel, or even Python, I’ll help you figure out the logic behind the numbers. Whether it’s a simple t-test or a full data analysis, the support is matched to your level and your prof’s expectations. So, if stats is stressing you out, don’t waste time googling stuff that doesn’t help. Reach out and let’s get it sorted. With the right help, even hard data problems get easier.

Specialized Support for Distributions & Inferential Stats

Distributions and inferential stats confuse a lot of students; I’ve seen it happen often. When you pick the wrong distribution, the whole result can go off. People often just assume everything follows a normal distribution, which is not the case most of the time. I’ve seen folks use t-tests on skewed data or apply a z-test with no idea what the population standard deviation is. Same with binomial and Poisson: if you don’t know what your data is doing, it’s easy to make a mistake. I always tell clients, don’t rush to test until you know the shape of your data. When it comes to inferential stats, people get caught up in p-values but forget what they even mean. That’s why I slow it down. I explain confidence intervals, margins of error, and all that. Sometimes non-parametric tests are the better choice, but people are scared of them because they sound harder. So yeah, if your stats class feels like a mess, I can help. I explain each part like we’re sitting at a coffee table. That’s how I make stats make sense.

Help With Regression, ANOVA, Chi-Square & Correlation

Stats stuff can get real confusing when you’re dealing with regression, ANOVA, chi-square and correlation all at once. Lots of students don’t even know where to start. I’ve been through this over and over with folks and I know exactly where people trip up. Regression? People think it’s just a straight line, but they forget to test the assumptions. Same for ANOVA: you can’t just throw it in, you’ve got to check things like homogeneity of variance. I always remind my clients, don’t rush it. Chi-square’s easy to mess up too. Folks forget it needs expected counts to be high enough (a common rule of thumb is at least 5 per cell), or else the whole result’s shaky. And correlation? Too many think it means causation, which it doesn’t. So yeah, I don’t just hand over the results. I actually go step by step, walk you through what each test means, why we used it, and what the numbers say. That’s what makes the grade, trust me.
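The expected-count check for chi-square can be sketched in a few lines of plain Python. The helper names and the minimum of 5 are assumptions for illustration (5 is a widely used rule of thumb, not a hard law), and the tables are made up.

```python
def expected_counts(table):
    """Expected cell counts under independence: row total * column total / grand total."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand = sum(row_totals)
    return [[r * c / grand for c in col_totals] for r in row_totals]


def chi_square_statistic(table):
    """Pearson chi-square statistic for a contingency table of observed counts."""
    exp = expected_counts(table)
    return sum(
        (o - e) ** 2 / e
        for obs_row, exp_row in zip(table, exp)
        for o, e in zip(obs_row, exp_row)
    )


def small_expected_cells(table, minimum=5.0):
    """Return (row, column) positions whose expected count falls below the minimum."""
    exp = expected_counts(table)
    return [
        (i, j)
        for i, row in enumerate(exp)
        for j, e in enumerate(row)
        if e < minimum
    ]


# Hypothetical 2x2 tables.
print(small_expected_cells([[10, 20], [30, 40]]))  # no problem cells
print(small_expected_cells([[2, 3], [4, 41]]))     # first row is too sparse
```

When the second function returns any cells, an exact test (for example Fisher's exact test for a 2x2 table) or merging categories is usually worth considering before trusting the chi-square result.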

Accurate P-Values, Confidence Intervals & Effect Sizes

In stats, it ain’t just about running a test and copy-pasting the output. The real thing is knowing what those numbers actually mean. I always make sure to include correct p-values, confidence intervals, and effect sizes so your work is legit. I’ve seen folks report a p-value and say ‘it’s good enough’ without explaining what it says. Like, just because p = 0.03 doesn’t mean the result is important. You’ve got to look at the confidence interval too: it shows the range of values that fit the data, and how precise the estimate is. If the CI is too wide, your results might not be stable. Effect size? That’s even more important sometimes. A tiny difference can be statistically significant in a big dataset but mean nothing in real life. I always highlight that part and make sure it’s explained clearly. If you feel stuck with interpreting all that, or your professor asked for more explanation, I can fix that. I’ll make the outputs make sense, not just for you but for whoever’s grading it too.
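Here is a small sketch of reporting all three numbers together for a two-group comparison. It assumes a large-sample normal (z) approximation, which keeps it inside Python's standard library; for small samples a t distribution would be the usual choice. The function name and the data are hypothetical.

```python
import math
from statistics import NormalDist, fmean, stdev


def compare_groups(a, b, confidence=0.95):
    """Mean difference with a two-sided p-value, a confidence interval and Cohen's d.

    Uses a normal approximation, so treat it as a sketch for large samples only.
    """
    mean_diff = fmean(a) - fmean(b)
    var_a, var_b = stdev(a) ** 2, stdev(b) ** 2
    se = math.sqrt(var_a / len(a) + var_b / len(b))  # standard error of the difference
    z = mean_diff / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    crit = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    ci = (mean_diff - crit * se, mean_diff + crit * se)
    pooled_sd = math.sqrt(
        ((len(a) - 1) * var_a + (len(b) - 1) * var_b) / (len(a) + len(b) - 2)
    )
    return {
        "mean_diff": mean_diff,
        "p_value": p_value,
        "ci": ci,
        "cohens_d": mean_diff / pooled_sd,  # standardized effect size
    }


# Hypothetical scores for two small groups (too small for the approximation
# to be trustworthy; shown only to illustrate the output).
print(compare_groups([2, 3, 4, 5, 6], [1, 2, 3, 4, 5]))
```

Reading the three values side by side is the point: the p-value says whether the difference is distinguishable from zero, the interval shows how precisely it is estimated, and Cohen's d says whether it is big enough to matter.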

Secure & Confidential Take My Stats Class Service

Scared someone’s gonna find out you got help for your stats class? Don’t be. My Take My Stats Class service is fully private and safe. I know how important your privacy is and I keep things lowkey. When you hire me for your stats course or online exam, your info is protected all the way. I don’t share logins, I don’t re-use work, and I don’t keep anything that links back to you. Everything is handled without noise. No emails, no leaks. I take care of full classes, weekly homework, tests, even discussion boards, all in your name. Whether you’re using Canvas, Moodle, Blackboard or anything else, I’ll make sure it gets done smooth and right. Many students feel nervous getting help because they don’t want to be caught. I get it. That’s why everything I do is quiet, clean and pro. You’ll get good marks, but no one will know how.

Sharing login info for your online class or stats assignment sometimes feels weird, right? I totally get it. But don’t worry, I use safe login sharing methods and our communication is always secure and private. When you send your login, I only use it to finish the work. I don’t mess around with anything else on your account. After your task is done, you can just change the password. I don’t save any logins or write them down anywhere. I use encrypted chats or secure platforms to share files and talk about the work, not regular email or stuff that can be seen by others. Your stuff stays between me and you. Many students feel nervous about sharing a login, and I understand that. But I’ve been doing this for a long time and never had any trouble. No misuse or anything shady. So if you’re worried about keeping things safe but still want help, this is the place. I’ll do the work, your login stays safe, and nobody else even knows about it.

If you’re gonna pay someone to do your stats work, you should at least know they’re actually qualified. That’s why I only work with real experts who have verified degrees and a proper academic background in stats, math, econ or data science. We’re not just hiring random people from the internet who watched YouTube videos. These are people who studied this stuff, got Masters or PhDs, and know how to use tools like SPSS, R, STATA, Python and Excel properly. Every assignment is done from zero, with no copied templates or pre-made answers. The experts pick the right models, clean the data, run the tests, and write it up in a way that your teacher won’t question. If your professor asks for an explanation or a source, no problem. We write it cleanly and give proper interpretations too. So yeah, don’t risk your grades with people who don’t know what they’re doing. When you get help from us, you know it’s from people who actually studied stats and know what matters.

When you reach out for help, you don’t wanna stress about your info being shared. That’s why I offer private support where your name, assignments or login details are never given to anyone. Ever. I know how risky it feels to trust someone online. So I made sure everything stays between just us. Once your assignment is done, I don’t keep your file or store your name. I don’t post work anywhere or reuse it for someone else. You won’t get any emails, and no one will know you even got help. It’s direct, safe, and quiet. Even the chats or messages stay private. No leaks, no nonsense. Many students don’t ask for help because they’re scared of being caught or exposed. I totally get that. That’s why your privacy is something I take seriously every time. So yeah, if you need academic help but don’t wanna take a risk, you can trust this process. Your info doesn’t go anywhere, and your work will be handled like it’s my own.

Technical Statistics

Affordable Statistics Assignment Help for University Students

Being a uni student isn’t easy and stats assignments don’t really help, do they? Between classes, part-time jobs and all the other stuff, it’s tough to find time for confusing things like chi-square tests or running STATA models. That’s why I offer affordable stats help that actually makes sense for students.

I’ve helped loads of students who just couldn’t afford high-end tutors or fancy platforms. My prices? Totally student-friendly. Whether you need help with SPSS, Python, R or MATLAB, or even just figuring out what your professor meant, I’m here for it. You’ll get correct answers, clean outputs and simple explanations. Need graphs or APA tables? Sure, no problem. No extra charge for things that should already be included. If price was stopping you before, it shouldn’t now. I make stats easier and cheaper, so you don’t have to stress. Send me the assignment, and I’ll help you through it quick, clear and without judgement. In the end, it’s about getting things done without feeling broke or lost.

Student Discounts & Budget-Friendly Pricing

Being a student means dealing with short deadlines and small budgets too. I totally get that, which is why I try to give discounts and flexible prices for students who need help with assignments, courses or tutoring. You don’t have to pay crazy amounts to get good support. I’ve helped lots of students who were stressed but couldn’t afford the expensive stuff. So I try to keep prices fair, with no weird surprise charges. You know what you’re paying for and what you’ll receive. If you’ve got more than one task coming up during the term, I also offer combo deals or semester-long support so you don’t spend too much. Even if something is due soon, I still try to keep it affordable. Discounts are there for new users, repeat clients and even when you refer a friend. Just tell me what you need and I’ll try to adjust. Getting help shouldn’t cost a fortune. If you want quality support at student rates, I’m here to sort you out.

Free Revisions Until You’re Fully Happy

When you ask someone to do your work, you should feel relaxed. That’s why I always offer free revisions: no extra money, no questions, no limit on how many. If something doesn’t look right, I fix it. That’s just how I work. Sometimes the teacher gives some feedback later. Or maybe you read it again and feel something is not how you want it. No problem. Just message me and I’ll make the changes. Usually I send back the updated file in a few hours. It’s not always about getting it perfect on the first go. It’s more about getting it right in the end. I stay available after delivery to help make the file exactly how you want it. That’s why many students trust me again and again. So if you’ve been disappointed before by people who stop replying after payment, you won’t face that here. I stay till the end, till you’re good with what you’ve got.

Trusted by College & Graduate-Level Students Worldwide

I’ve been helping college and grad-level students from different countries for many years now. Doesn’t matter if it’s an Ivy League school, a UK university, or a small online program, students trust me to get their academic work done well and on time. From last-minute assignments to long projects, I’ve seen and done a lot. Many students keep coming back because they know I take the work seriously. I ask questions, follow their style, and treat their work like it’s important, because it is. I’ve helped with stats, psychology, economics, public health and more. I don’t give copy-paste answers. I do the work custom, based on what the student’s school or course is asking. Students from the USA, Canada, Australia, the UK, Europe and even Asia have used my help. And most of them said they were happy and came back again. So yeah, if you’re looking for someone who knows what they’re doing and keeps your info private, I’m here to help you out.

Take My Online Statistics Class, Quiz, Test or Full Course

Got an online stats class and just can’t keep up? That’s where I come in. You can actually hire someone to take your stats class, quiz, test, even the full course, no joke. I’ve helped many students who were about to fail or just didn’t want to deal with the headache. Weekly assignments, midterms, finals, timed quizzes, I’ve done it all. I know how to navigate platforms like MyStatLab, Moodle, Canvas etc. From basic data stuff to advanced regression, I’ll handle it with care. All your work will be submitted on time and done right. No missing grades, no guesswork. And don’t worry, everything stays totally private. If you’re already behind or just tired of stressing, send over the login and let’s fix this. You focus on other things, I’ll deal with the stats mess. Simple as that.

Full-Course Handling With Weekly Progress Reports

Managing a whole statistics course can be overwhelming, especially when you’ve got other assignments, deadlines and maybe even a part-time job. That’s why we offer complete full-course support, from first quiz to final exam. Our professionals don’t just help with individual tasks, they take care of the full timeline. Weekly progress updates keep you in the loop so you always know what’s been done, what’s due, and where you’re standing grade-wise. Clients love the transparency. It’s not just ghostwork, it’s a partnership. We use your syllabus, LMS access (if available), and class patterns to stay aligned with instructor expectations. Missed a lab? We’ll cover it. Struggling with time series? We’ll tutor you or handle it. You don’t have to micro-manage us, just give us the roadmap, and we’ll drive. Students from the best colleges trust us with their full-course loads every semester, because we deliver quietly, accurately, and on time. If you need peace of mind, expert support, and regular updates, then full-course handling with weekly check-ins might be the smartest move you make this term.

Help for Blackboard, Canvas, MyMathLab, Pearson & More

Trying to handle everything on Blackboard, Canvas, MyMathLab and Pearson is honestly too much sometimes. That’s why I help. Straight up, I go into your course dashboard, check what needs to be done, and I get on it. Whether it’s a tricky timed quiz in MyMathLab that you don’t wanna mess up, or Pearson assignments that are way more confusing than they should be, I’ve dealt with this stuff so many times. People always ask, ‘Can you actually do it safely?’ and yeah, I do. I keep logins safe and confidential. You won’t have to worry about your info, or your work not getting submitted on time. If you’ve got Canvas discussions due, or Blackboard tests coming up, just hit me up. I’ll sort it. You’ll have less on your plate, and more time to actually breathe.

High Grades Guaranteed or Refund Options Available

When students come to me, they usually got this one worry sitting at the front of their mind what if the work doesn’t score good? I totally get that. That’s honestly why I made my service with real commitment in mind. If I’m doing your work, it should get high grades. If not, then what’s even the point? I’ve noticed, when students know they’re protected, they calm down. They can focus more, stop stressing so much. That’s exactly why I give high grade guarantee or simple refund if things don’t go as expected. No weird rules, no small print nonsense. Just straight up honesty. I do my part, and you get the results you paid for. Lot of students say this makes them feel safer taking on tough courses or short deadlines. And well, when you’re stuck or falling behind, this type of backup really does help. You’re not risking your money on a maybe.

Hire a Statistics Solver for Excel, R, Python, SPSS & STATA Projects

Need someone to solve those tough statistics problems in Excel, R, Python, SPSS or STATA? You’re at the right place. I offer expert-level stats solving for students and researchers who just need it done right. Maybe you’re working on a regression model in STATA, or a messy dataset in SPSS, or even a time-series thing in R. No worries, I can handle all of that. I clean the data, choose the model that works best, and give you results that look good and make sense. Excel stuff? Yeah, I do that too. Pivot tables, Analysis ToolPak, even Solver and graphs, whatever your spreadsheet needs. I don’t just throw answers back at you. I try to explain the steps so you get what’s going on. All work is done from scratch, private and on time. So if your stats task is stressing you out or the deadline’s coming too fast, let me help. I’ll make sure you get a clean submission that doesn’t get flagged and actually helps your grade.

Professional Analysts Solve Complex Data Problems

Data problems are tough, especially when they get messy or don’t make any sense at first. People try many things, but are still stuck with errors, missing info, or wrong results. I’ve done lots of projects where clients were like, ‘I have this big dataset and I don’t even know where to begin.’ And that’s ok! You’re not supposed to be an expert in everything. I jump in, look at what’s wrong or missing, then apply the right tool. Sometimes it’s regression, other times it’s just about cleaning stuff and visualizing it properly. Excel, Python, R, SPSS… I use whatever’s needed. But tools are just part of it. The real skill is knowing what question to ask and how to make the data speak. I also explain the steps, so you’re not left guessing what happened. If you’ve got a problem in your assignment or real work and it feels like just too much, don’t stress. I got your back.

Macro & Script Coding for Automation

If you’ve ever found yourself repeating the same task again and again (copying values, formatting stuff, clicking through a dozen steps just to get to the same place), yeah, that’s where automation can really help. I write macros and scripts that turn boring, manual work into one-click shortcuts. In Excel, I use VBA to make macros that clean up messy data, build reports fast, and do calcs without errors. For bigger workflows, I use Python or even R scripts that run across different tools and save hours of clicking. It ain’t just about saving time, it’s also about not messing things up. Scripts don’t forget steps or make typos. A lot of times, people come to me like, ‘Can this be automated?’ and 9 outta 10 times, yeah, it totally can. I’ve automated stuff like file renaming, sending emails, pulling info from websites, even changing file formats. So if you’re stuck doing the same stuff over and over, let me help. We’ll build something once that works every time after.
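As a taste of what a small automation script looks like, here is a minimal file-renaming sketch in Python. The function name, the prefix, and the `*.csv` pattern are all hypothetical choices for illustration; it defaults to a dry run so nothing is touched until the plan looks right.

```python
from pathlib import Path


def rename_with_prefix(folder, prefix, pattern="*.csv", dry_run=True):
    """Plan (and optionally perform) renames; returns (old name, new name) pairs."""
    folder = Path(folder)
    planned = []
    # sorted() builds the full list first, so renaming does not disturb the loop.
    for path in sorted(folder.glob(pattern)):
        if not path.is_file() or path.name.startswith(prefix):
            continue  # skip directories and files that already carry the prefix
        target = path.with_name(prefix + path.name)
        if target.exists():
            raise FileExistsError(f"{target} already exists")
        planned.append((path.name, target.name))
        if not dry_run:
            path.rename(target)
    return planned
```

A typical use would be calling `rename_with_prefix("exports", "2024_")` once to inspect the returned plan, then again with `dry_run=False` to apply it. The folder name here is made up.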

Fully Explained Results With Graphs & Plots

Running the analysis is only half of the work, the real challenge is telling what it means. That’s why I always give you full results with graphs, plots and explanations. So you’re not just looking at random numbers but an actual story from the data. People sometimes show a chart but don’t even say what it means. I don’t do that. If there’s a scatterplot showing two things go up together, I’ll say it like, ‘this tells us there’s a strong positive link between A and B.’ Simple and smart. Graphs can be made in Excel, Python, SPSS or whatever you’re using. And I’ll write the part that explains the picture. You won’t need to guess what to say in your results section. So yeah, if you feel stuck with what to write after the analysis, or the graphs look blank to you, let me handle it. You get the full thing, visuals, writing, and logic, all in one clean and understandable package.

Meet Our Brilliant Minds

Our Leadership Team

Keith Martin

Data Cleaning Management

Dale Cunningham

Descriptive Statistics EDA

Lorna Finch

Statistics Assignment Help

Marie Wells

Graphs Data Visualization

Submit Your Stats Homework and Get It Done Before Deadline

Deadlines always come too fast, especially when it’s a stats assignment that needs R coding, STATA models, or weird-looking SPSS tables. If you’re stuck and the clock’s running out, just send me your assignment. I’ll make sure it’s done before the deadline, no stress.

I’m good at working fast without losing accuracy. Whether you’re working with Python, R, MATLAB, or just need to fix your dataset, I’ve done it all. You’ll get clean outputs, answers that make sense, and yes, I’ll explain the results too if you want. Most students don’t want fluff. They just want it done, quick and right. That’s how I work. My clients often send work at 2am, and I’ve still helped them submit on time. Just upload the stats homework, tell me the deadline, and go chill. You’ll have it done, formatted, and ready to submit before your prof even sends that ‘final reminder’ email.

Urgent Same-Day & Last-Minute Completion Available

Deadline is today and you haven’t started yet? Don’t worry, I do urgent, same-day and last-minute help for assignments, labs, quizzes and more. Whether you forgot about a task or just didn’t have time, I can still get it done in a few hours. Just because it’s last minute doesn’t mean it has to be low quality. I make sure answers are still accurate and formatted right. From quick math questions to a full written report, I cover it all fast. I’ve done assignments that were due in 2-3 hours and still came out great. If it’s doable, I’ll handle it without delay. But if the time is too short, I’ll let you know honestly. No stress, no blaming, just quick, reliable work when you’re in a jam. So if you’re looking at the clock and your heart’s racing, just message me. I’ll jump in quick and help you submit it before it’s too late.

Guaranteed On-Time Delivery With Quality Checks

Deadlines matter a lot, because when your marks depend on it, you can’t really afford to be late. That’s why I make sure to always deliver the work on time. Be it a last-minute quiz, a short-answer task or a whole report due in a few hours, I do it fast and on point. But just doing it quick ain’t everything. I read through all the answers, recheck the maths or code, and make sure it looks fine before sending. Even if it’s rushed, I still try not to mess up the important parts. I have helped many students with same-day stuff and haven’t missed a deadline once. If something seems not possible, I say it early instead of promising and messing up. And if the prof asks for changes or there’s a mistake, I fix it fast too. You don’t have to worry about small errors getting you in trouble. So yeah, if you need quick help that’s also careful, I’m your guy. Send your task, and I’ll take care of it soon.

Track Progress and Get Updates Anytime

Sometimes students tell me they feel stressed when they don’t know what’s happening with their assignment. That’s why I make sure to always keep you updated. I send messages regularly and share what’s done, what’s in progress, and what’s still to do. No guesswork needed. Once I start on your task or course, I break it into smaller chunks and do them one by one. Every time something’s done, I note it down, and you get to see what’s going on. I normally send weekly reports, but if you want them more often, I can do that too. Sometimes your teacher adds something suddenly or a deadline changes without notice. When that happens, I let you know quick and adjust things. You won’t be surprised at the last moment. Many clients said they feel calmer when they get regular updates, even just small ones. It helps knowing someone is handling it seriously. You don’t have to keep checking again and again. Just relax. I’ll keep you posted before you even need to ask.

Online Statistics Tutoring and Exam Assistance – Hire a Professional

When stats gets confusing and you don’t know what’s going on anymore, hiring someone who knows statistics well can really save the day. Online tutoring is super helpful when you just can’t figure out those regression things or weird graphs with symbols you don’t understand.

Exams are even worse, especially when time is short and your confidence is low. That’s why a pro tutor can help you prepare better, or even assist during your online quiz or timed test. From descriptive stuff to inferential stats like ANOVA or logistic models, we handle it all.

Most tutors explain things step by step and show you how to do the problems instead of just giving answers. You can use Zoom, screen share, or even get help by messages.

So yeah, instead of guessing through your next stats test or just giving up, hire someone who can actually make you understand or get the job done right. Don’t leave it for last minute start early and get expert help.

1-on-1 Live Help With Real Expert

Sometimes you just need to talk to someone who actually knows what they’re doing. That’s why I offer one-on-one live help sessions with real experts. No bots, no pre-recorded video junk. Just actual people helping you, right when you need it. If you’re stuck on math homework, confused about a business model, or just don’t get how to use SPSS or Excel, you don’t gotta sit alone guessing. In a live session, we go straight to the point.

No wasted time, no repeating easy stuff. We share screens, solve things together, and explain what’s happening as we go. You can ask anything, stop me anytime, or even just watch while I do the hard parts. That’s cool too. Many students say it helps them a lot, especially when a deadline is near. So if you want real help, from a real person, in real time, book a 1-on-1. No drama, just clear help when you need it most.

Learn Using Real Examples, Graphs & Data Sets

When it comes to understanding statistics, nothing helps more than working with real-life examples and relatable data. That’s why our tutoring and assignment support services focus on using actual datasets, easy-to-follow graphs, and real research scenarios. It helps students visualise concepts better and not just memorize formulas. We make sure that each student sees how the theory is applied in practice.

For example, instead of just learning a regression formula, we show how to use it with an Excel dataset or SPSS file to draw conclusions from data. You can see graphs that tell the story of the data, not just numbers on paper. Many students struggle because they never see what data looks like or how to make it talk. We bring that data to life through colorful visuals and step-by-step walkthroughs. Whether you’re doing a thesis, a term paper, or a timed quiz, real examples make the learning stick. Graphs, plots, tables and even mistakes are included in our lessons to help you learn deeply. You don’t just learn the how, but also the why behind every method. That’s how true mastery in statistics starts.
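To show what "using the regression formula on a dataset" can look like, here is a minimal least-squares sketch in plain Python. The data (hours studied versus quiz score) is invented for illustration, and the function name is an assumption; in practice the same numbers would come out of Excel, SPSS or STATA.

```python
from statistics import fmean


def fit_line(x, y):
    """Ordinary least squares for one predictor: returns (slope, intercept, r_squared)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    mean_x, mean_y = fmean(x), fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each vary")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, 1 - ss_res / syy


# Hypothetical data: hours studied and quiz score.
hours = [1, 2, 3, 4, 5]
score = [52, 58, 61, 67, 72]
slope, intercept, r2 = fit_line(hours, score)
print(f"score = {intercept:.1f} + {slope:.1f} * hours, R^2 = {r2:.3f}")
```

The slope is the part worth putting into words: in this made-up data, each extra hour of study goes with roughly five more points on the quiz.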

Master Difficult Topics Faster With Guided Sessions

Sometimes, certain statistical topics just refuse to make sense, no matter how many times you watch videos or re-read the textbook. That’s exactly where our guided tutoring sessions come to the rescue. We specialize in helping students break down complex topics like time series, regression models, ANOVA, machine learning algorithms and multivariable analysis into bite-size pieces.

Our experts don’t just explain formulas, they relate the concept to real-life examples and build your understanding step by step. If you’re stuck on a STATA do-file, can’t interpret logistic output, or are confused about why your residuals aren’t normal, we walk you through every point of confusion, slowly and clearly.

Many students tell us, ‘I was trying to do it myself for 3 days and after 1 hour with your tutor, I finally understood.’ That’s the power of having a live person break it down, using screen-share, annotations and examples from your own dataset. Guided sessions are schedule-friendly too: you can book one for an urgent deadline, right before exams, or weekly for ongoing course help. We don’t judge. Whether you’re totally lost or just need a little push, we meet you where you are. Learning becomes faster, simpler and a little less stressful, that’s our promise.

Urgent Statistics Assignment Help With Guaranteed Results

Deadline coming fast and your stats assignment still ain’t done? Don’t freak out, I offer urgent statistics help with guaranteed results. Doesn’t matter if it’s due in a few hours or tomorrow morning, I can get it done quick and right. From simple charts to crazy regression problems, I’ve handled all kinds of stats assignments. I use tools like SPSS, R, STATA, Python and even Excel. So yeah, whatever the platform or software, I can help you out. What most students need in rush situations isn’t just speed, they want it done correctly. That’s why I make sure the answers are accurate and the work looks clean. No weird formats, no guesswork. Just proper results. Even if the instructions are confusing or the dataset looks like a mess, I won’t say no. I’ll fix it fast. So if your clock is ticking and nothing’s ready, just send me your assignment. I’ll reply fast and handle it so you can chill knowing it’s being done by someone who actually knows what they’re doing.

Plagiarism-Free, Error-Free, Accurate Solutions

When it comes to school or even office work, nothing beats having something that’s original and right. That’s why I always make sure the stuff I deliver is totally plagiarism-free and without mistakes. I don’t really believe in taking shortcuts, I just believe in doing it clean and good. Every project or assignment I take, I write new, with no copy-pasting from old work or random stuff online. I check it with plagiarism software and also go through the grammar, spelling, even the formatting, so you don’t have to fix anything later. I’ve had people come to me after getting bad grades from wrong answers, copied work or silly typing mistakes. So if you’re tired of getting messed-up stuff from random helpers, just give it to me. I’ll make sure it’s done properly: no stress, no copying, just clean, original work you can feel good submitting.

Submitted in your Required Formatting Style

One thing I’ve learned in years of academic writing? Formatting really does matter. It’s not just looks, it’s about meeting what they expect, passing checks, and honestly just showing that you know the system. That’s why every single assignment or case study I work on is done and submitted exactly in the style you asked for: APA, Harvard, MLA or even IEEE. Lots of people come to me frustrated. Their papers got bounced back because of wrong margins or references being off or, well, just not ‘looking right.’ So that’s the stress I take away. Sometimes your teacher gives a sample file. If that happens, I follow that format closely, real close. And if not, no worries, I go with the official guide. From Word styling to even LaTeX (if needed), it’s all sorted. So yeah, if you’re tired of fixing formatting again and again, let me handle it. It won’t just be good writing, it’ll look the part too.

Recent From Blog

Expert Help With STATA Data Cleaning & Management Assignments

Messy datasets can ruin your entire analysis before you even begin. From missing values to mis-coded variables, STATA users often struggle to prepare clean data for accurate results.

In this blog, we explore practical tips, expert fixes, and automation tricks for smarter data cleaning and management.

Learn how proper preprocessing can upgrade your assignments, boost your grades, and save hours of frustration.

Latest Articles on Epidemiology, Biostatistics & Survival Models

Explore practical guides and insights on analyzing public health data using STATA.

Learn how to apply Biostatistics, Epidemiology, and Survival Models with real-world datasets.

From survey data cleaning to hazard ratios, our blogs simplify complex medical research techniques.

Latest Guides on Forecasting, ARIMA & Unit Root Models

Learn how ARIMA models improve forecasting accuracy using real-world data in STATA.

Understand Unit Root tests to check stationarity before running any time-series model.

Discover common errors students make in differencing, lag selection, and interpretation.

Explore step-by-step tutorials that turn complex time-series analysis into simple, practical results.
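As a rough illustration of why differencing comes up before time-series modelling, here is a small Python sketch. It is not a unit root test (a formal check would be something like the augmented Dickey-Fuller test in a stats package); it only shows how first differences remove a trend and how lag-1 autocorrelation flags a trending series. The function names and the series are made up.

```python
from statistics import fmean


def difference(series, lag=1):
    """Return the lagged differences series[i] - series[i - lag]."""
    return [series[i] - series[i - lag] for i in range(lag, len(series))]


def autocorrelation(series, lag=1):
    """Sample autocorrelation at the given lag."""
    mean = fmean(series)
    denom = sum((v - mean) ** 2 for v in series)
    if denom == 0:
        raise ValueError("series has no variation")
    num = sum(
        (series[i] - mean) * (series[i - lag] - mean)
        for i in range(lag, len(series))
    )
    return num / denom


# Hypothetical series with a steady upward trend.
trend = list(range(1, 21))
print(round(autocorrelation(trend), 2))   # high: neighbouring values move together
print(difference([1, 2, 4, 7, 11, 16]))   # first differences of a curved series
```

A lag-1 autocorrelation close to 1 in the raw series is a hint, not proof, that differencing may be needed before fitting something like ARIMA.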